Workload Velocity

Reusable orchestration patterns, context packaging, and release-ready workflows shorten the path from prototype to production without rebuilding the operating layer for each use case.

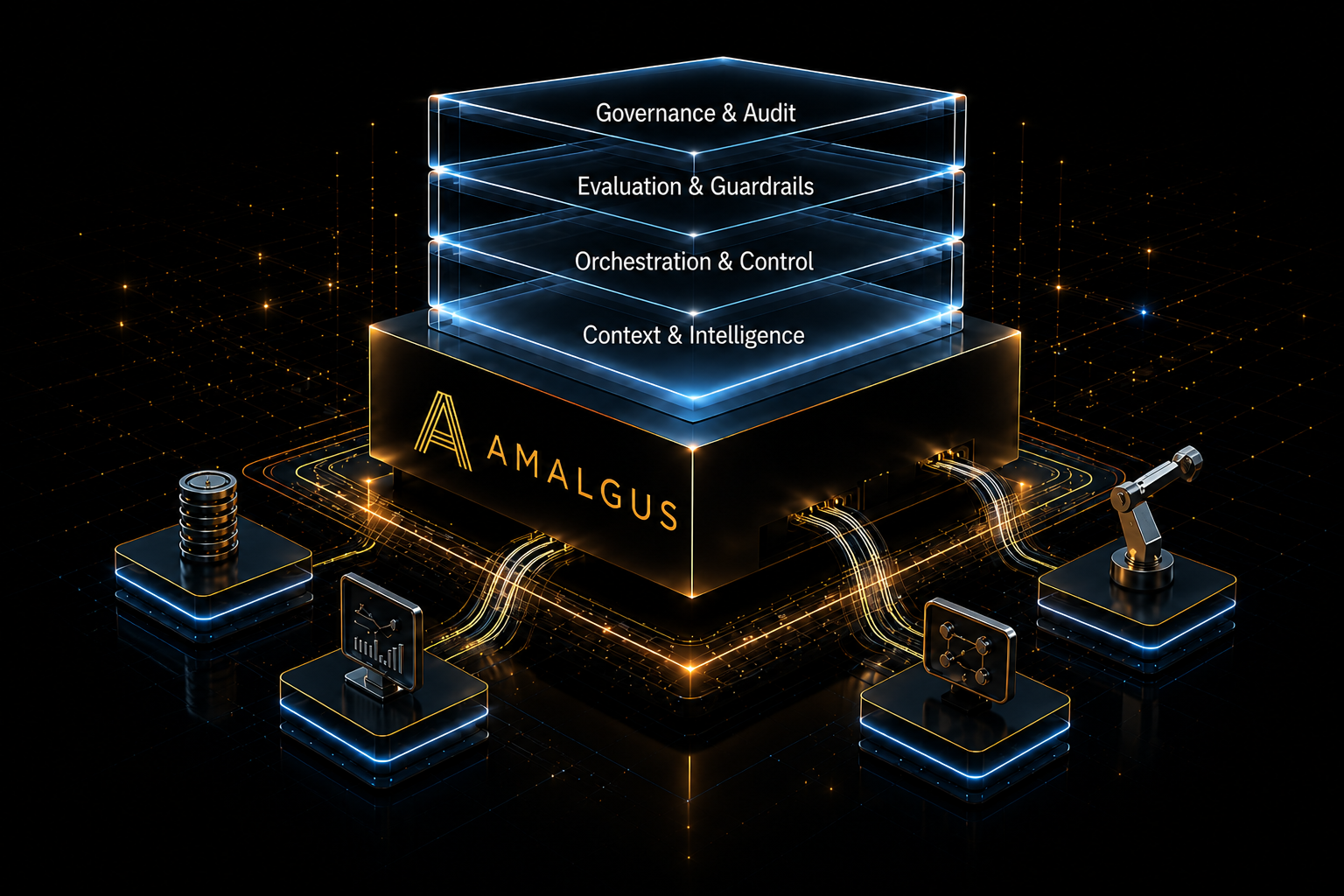

Amalgus designs and deploys production-grade AI infrastructure for organizations moving beyond demos into systems that must operate, adapt, and be trusted under real-world conditions.

We help customers succeed by engineering the operating layer around AI workloads: orchestration, context control, evaluation, telemetry, governance, and deployment discipline across enterprise, industrial, and autonomous environments.

Built for AI workloads where reliability, traceability, governance, and operational accountability matter.

A capable model is only one component of a working system. Production performance depends on how context is selected, what actions are authorized, how workflows are executed, how outputs are evaluated, and how exceptions are contained.

Amalgus enables customers to move from isolated AI capability to governed operation by coordinating the operating layer around execution, controls, and continuous oversight.

Policies, tool permissions, evidence requirements, evaluation gates, fallback paths, and review thresholds keep AI workloads inside explicit authority, safety, and risk boundaries.
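As a rough illustration of how such boundaries might be expressed in code, the sketch below checks a proposed tool call against a tool allow-list, an evaluation gate, and a review threshold. It is a minimal example under stated assumptions, not a description of the Amalgus implementation: `WorkloadPolicy`, `decide`, the field names, the tool names, and the numeric thresholds are all hypothetical.

```python
from dataclasses import dataclass
from typing import FrozenSet


@dataclass(frozen=True)
class WorkloadPolicy:
    """Hypothetical policy record; field names are illustrative only."""
    allowed_tools: FrozenSet[str]   # tool permissions
    min_eval_score: float           # evaluation gate: minimum acceptable score
    review_threshold: float         # risk at or above this goes to human review


def decide(policy: WorkloadPolicy, tool: str, eval_score: float, risk: float) -> str:
    """Return one of 'deny', 'fallback', 'review', or 'allow'."""
    if tool not in policy.allowed_tools:
        return "deny"        # outside explicit authority
    if eval_score < policy.min_eval_score:
        return "fallback"    # failed the evaluation gate, take the fallback path
    if risk >= policy.review_threshold:
        return "review"      # risky enough to require human approval
    return "allow"


# Assumed example values, chosen only to exercise each branch.
policy = WorkloadPolicy(
    allowed_tools=frozenset({"search_docs", "create_ticket"}),
    min_eval_score=0.8,
    review_threshold=0.5,
)
print(decide(policy, "create_ticket", eval_score=0.93, risk=0.2))   # allow
print(decide(policy, "delete_records", eval_score=0.99, risk=0.1))  # deny
```

The checks run from most to least restrictive, so a call to an unauthorized tool is denied no matter how well it scores.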

Every workload can be traced across context selection, model behavior, tool calls, approvals, exceptions, cost, latency, and outcomes to support audit, tuning, and continuous improvement.
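One way to picture this kind of traceability is a per-workload record that collects an event for each stage and rolls up cost and latency. The sketch below is illustrative only: `TraceEvent`, `WorkloadTrace`, the stage names, and the sample values are assumptions, not an Amalgus schema.

```python
import json
import uuid
from dataclasses import asdict, dataclass, field
from typing import Any, Dict, List


@dataclass
class TraceEvent:
    """One step in a workload, e.g. context selection or a tool call."""
    stage: str
    latency_ms: float
    cost_usd: float
    detail: Dict[str, Any]


@dataclass
class WorkloadTrace:
    """Hypothetical trace container; one instance per workload run."""
    workload_id: str = field(default_factory=lambda: uuid.uuid4().hex)
    events: List[TraceEvent] = field(default_factory=list)

    def record(self, stage: str, latency_ms: float, cost_usd: float = 0.0, **detail: Any) -> None:
        # Append an event; extra keyword arguments become free-form detail.
        self.events.append(TraceEvent(stage, latency_ms, cost_usd, detail))

    def totals(self) -> Dict[str, float]:
        # Roll up latency and cost across all recorded stages.
        return {
            "latency_ms": sum(e.latency_ms for e in self.events),
            "cost_usd": sum(e.cost_usd for e in self.events),
        }

    def to_json(self) -> str:
        # Serialize the whole trace for an audit log or downstream analysis.
        return json.dumps(asdict(self))


# Assumed sample values for illustration.
trace = WorkloadTrace()
trace.record("context_selection", latency_ms=12.0, documents=3)
trace.record("model_call", latency_ms=340.0, cost_usd=0.002, model="example-model")
trace.record("approval", latency_ms=0.0, approved_by="reviewer")
print(trace.totals())
```

Keeping every stage in one record means the same data can serve an audit, a cost review, or a tuning pass.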

The sandbox is forgiving; production is not. Amalgus helps organizations convert AI ambition into deployed systems with clear boundaries, measurable performance, runtime visibility, and governance built directly into the execution layer.